🚜 AgriGS-SLAM: Orchard Mapping Across Seasons via Multi-View Gaussian Splatting SLAM

Abstract

Agricultural robots operating in orchards face a uniquely difficult perception problem: rows of trees look nearly identical, canopy appearance shifts dramatically across seasons, and wind-driven foliage creates constant scene perturbations — all while the platform must localize and map in real time. Existing 3D Gaussian Splatting SLAM methods were designed for controlled, static indoor environments and transfer poorly to these conditions.

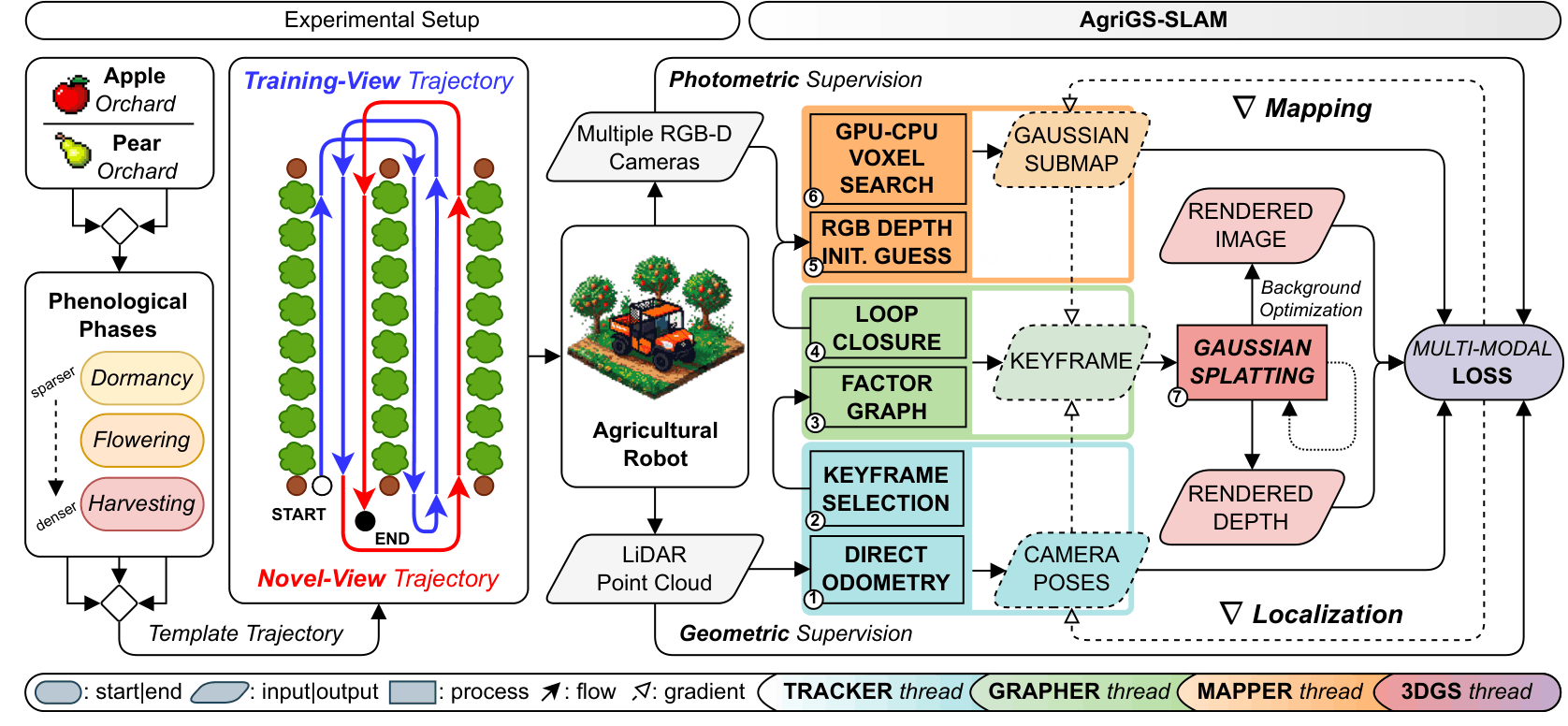

We present AgriGS-SLAM, a unified Visual–LiDAR SLAM framework that couples direct LiDAR odometry and loop closures with multi-camera 3D Gaussian Splatting (3DGS) rendering. A batch rasterization strategy over three synchronized RGB-D cameras recovers orchard structure even under heavy occlusions and limited lateral viewpoints, while a gradient-driven map lifecycle — executed asynchronously between keyframes — preserves geometric detail and keeps GPU memory bounded throughout long field traversals. Pose refinement is driven by a probabilistic KL depth-consistency term derived from LiDAR measurements, back-propagated through differentiable camera projection to tighten the geometry–appearance coupling.

We validate the system on a tractor-mounted platform in apple and pear orchards across three phenological stages — dormancy, flowering, and harvesting — using a standardized trajectory protocol that evaluates both training-view reconstruction and novel-view synthesis. The latter deliberately probes generalization beyond the optimized path, exposing the 3DGS overfitting that inflates scores in prior SLAM benchmarks. Across all seasons and orchards, AgriGS-SLAM consistently outperforms Photo-SLAM, Splat-SLAM, PINGS, and OpenGS-SLAM in rendering fidelity and trajectory accuracy, while operating in real time on-tractor.

Key Contributions

- 🔗 Unified gradient-driven Visual–LiDAR 3DGS-SLAM. End-to-end real-time formulation jointly solving ∇ Localization and ∇ Mapping: differentiable pose optimization with a LiDAR-guided KL depth-consistency loss, and incremental 3DGS driven by image-space gradients for bounded-memory, high-fidelity rendering across long agricultural trajectories.

- 📷 First real-time multi-camera 3DGS-SLAM for outdoor environments. Batch rasterization across three synchronized RGB-D cameras overcomes occlusion and viewpoint limitations of single-camera systems in repetitive orchard rows.

- 🌱 Cross-seasonal benchmark with a novel-view evaluation protocol. Extensive comparison against four state-of-the-art baselines in apple and pear orchards across dormancy, flowering, and harvesting, using a standardized training/novel-view split that prevents 3DGS overfitting from masking localization failures.
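The LiDAR-guided KL depth-consistency loss named in the first contribution is not spelled out on this page. As a minimal sketch only, assume the LiDAR depth and the rendered depth at each pixel are both modelled as univariate Gaussians, and that the loss is the mean KL divergence from the LiDAR distribution to the rendered one over pixels that have a LiDAR return. The function names, the direction of the divergence, and the two standard deviations are illustrative assumptions, not values taken from the paper.

```python
import math


def gaussian_kl(mu_p, sigma_p, mu_q, sigma_q):
    """Closed-form KL( N(mu_p, sigma_p^2) || N(mu_q, sigma_q^2) )."""
    return (
        math.log(sigma_q / sigma_p)
        + (sigma_p ** 2 + (mu_p - mu_q) ** 2) / (2.0 * sigma_q ** 2)
        - 0.5
    )


def kl_depth_loss(lidar_depths, rendered_depths,
                  lidar_sigma=0.03, rendered_sigma=0.10):
    """Mean per-pixel KL between LiDAR and rendered depth distributions.

    lidar_depths / rendered_depths: flat sequences of depths in metres.
    A LiDAR depth of 0 marks a pixel without a return and is skipped.
    Both sigma defaults are hypothetical placeholders.
    """
    terms = [
        gaussian_kl(d_lidar, lidar_sigma, d_render, rendered_sigma)
        for d_lidar, d_render in zip(lidar_depths, rendered_depths)
        if d_lidar > 0.0
    ]
    return sum(terms) / len(terms) if terms else 0.0


if __name__ == "__main__":
    lidar = [2.0, 0.0, 3.5]      # second pixel has no LiDAR return
    rendered = [2.1, 4.0, 3.2]
    print(f"KL depth loss: {kl_depth_loss(lidar, rendered):.3f}")
```

In a full system this term would be evaluated on tensors inside an autodiff framework, so that its gradient can flow through the camera projection to the pose. The scalar version above only illustrates the shape of the penalty: it grows quadratically with the depth mismatch, scaled by the assumed rendered-depth variance.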

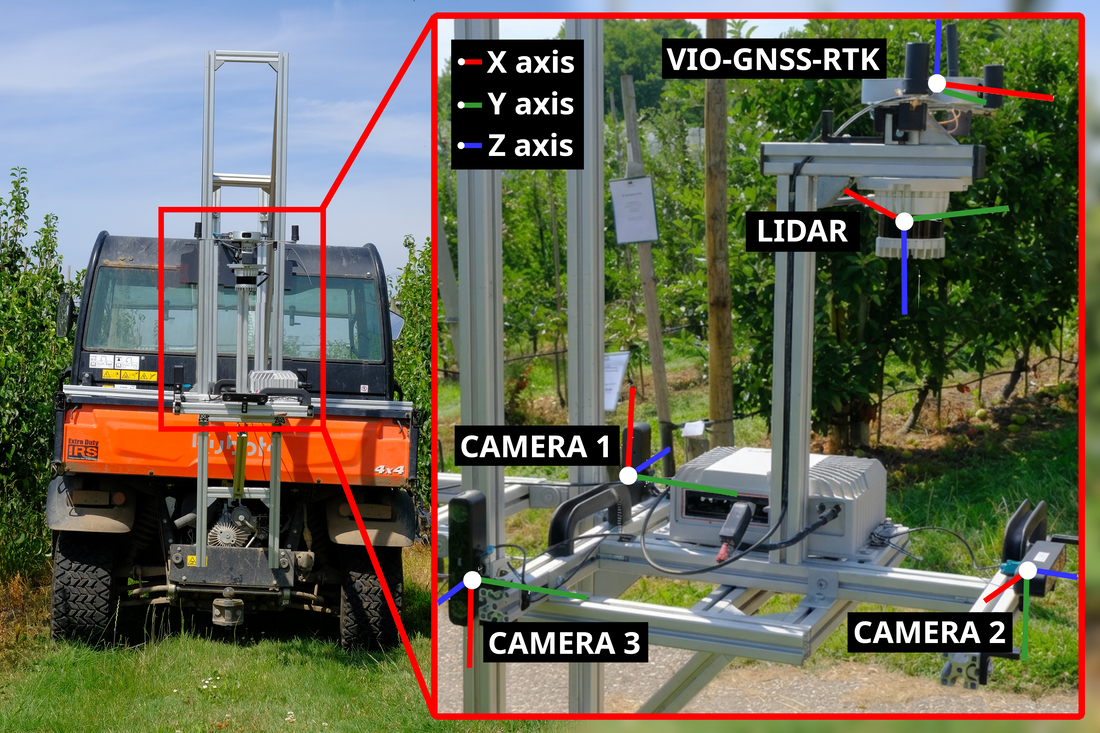
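The gradient-driven, bounded-memory map lifecycle from the first contribution can be pictured with a small sketch. It assumes a common 3DGS-style policy: prune nearly transparent Gaussians, duplicate those whose mean image-space gradient is high, then enforce a hard cap on the map size. The `Gaussian` record, the thresholds, and the budget rule are hypothetical and simplified (real systems also split large Gaussians and track positions, covariances, and colours); the page does not state the authors' actual criteria.

```python
from dataclasses import dataclass


@dataclass
class Gaussian:
    opacity: float            # in [0, 1]
    grad_accum: float = 0.0   # summed image-space gradient norms
    views: int = 0            # renders that contributed to grad_accum

    @property
    def mean_grad(self):
        return self.grad_accum / self.views if self.views else 0.0


def lifecycle_step(gaussians, grad_threshold=2e-4, min_opacity=0.005,
                   max_gaussians=1_000_000):
    """One prune / densify / budget pass between keyframes (assumed policy)."""
    # 1) Prune Gaussians that barely contribute to any render.
    kept = [g for g in gaussians if g.opacity >= min_opacity]

    # 2) Densify: clone Gaussians with a high mean image-space gradient,
    #    i.e. regions the current map reconstructs poorly.
    clones = [Gaussian(opacity=g.opacity)
              for g in kept if g.mean_grad > grad_threshold]
    merged = kept + clones

    # 3) Keep memory bounded: retain only the most opaque Gaussians.
    if len(merged) > max_gaussians:
        merged = sorted(merged, key=lambda g: g.opacity,
                        reverse=True)[:max_gaussians]

    # 4) Reset gradient statistics for the next accumulation window.
    for g in merged:
        g.grad_accum = 0.0
        g.views = 0
    return merged


if __name__ == "__main__":
    scene = [Gaussian(0.9, 0.01, 10), Gaussian(0.001, 0.5, 10),
             Gaussian(0.5, 0.0001, 10)]
    print(len(lifecycle_step(scene)))
```

Running such a pass asynchronously between keyframes, as the abstract describes, keeps the densification cost off the tracking path. The cap in step 3 is what bounds the memory in this sketch.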

Field Platform

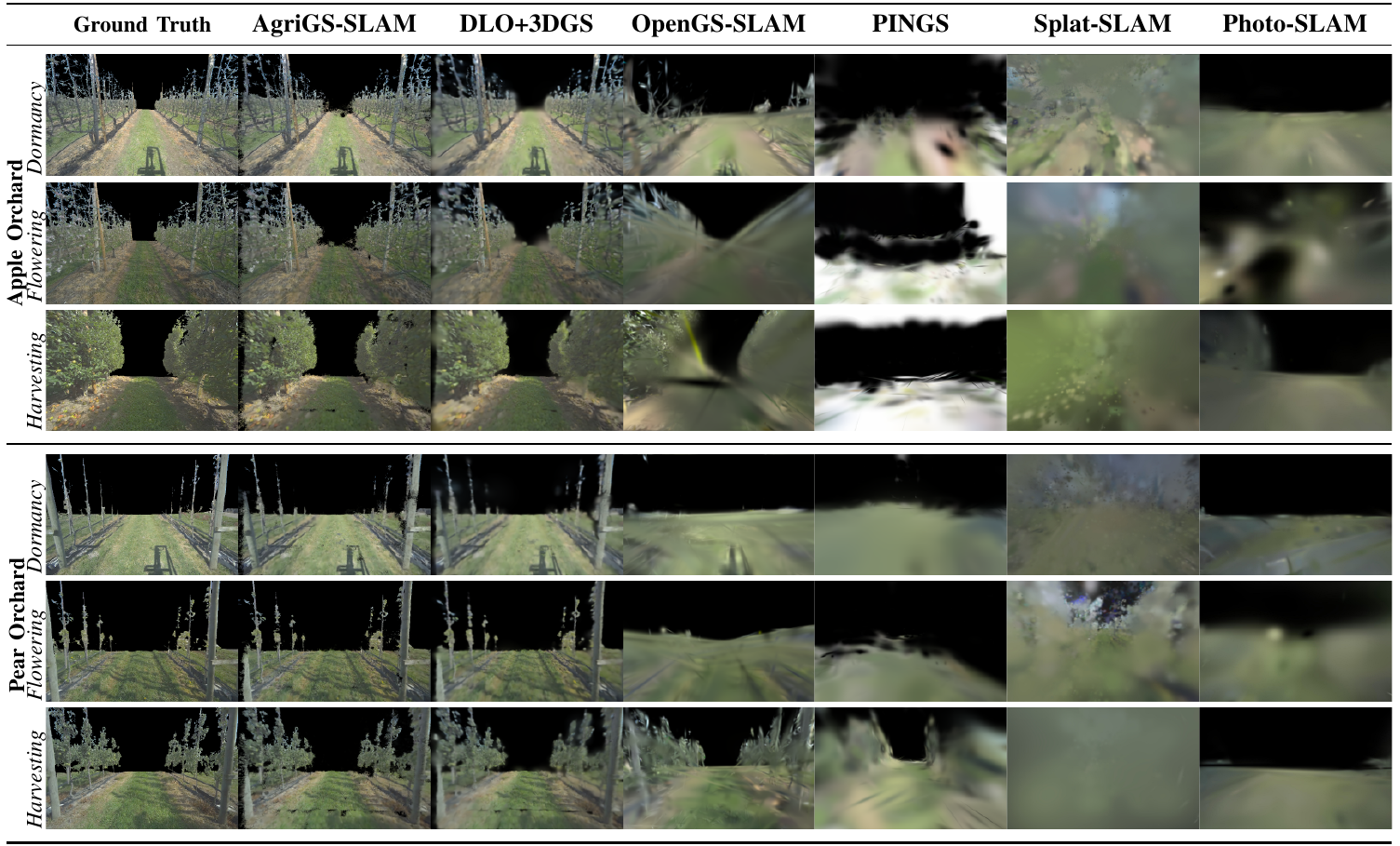

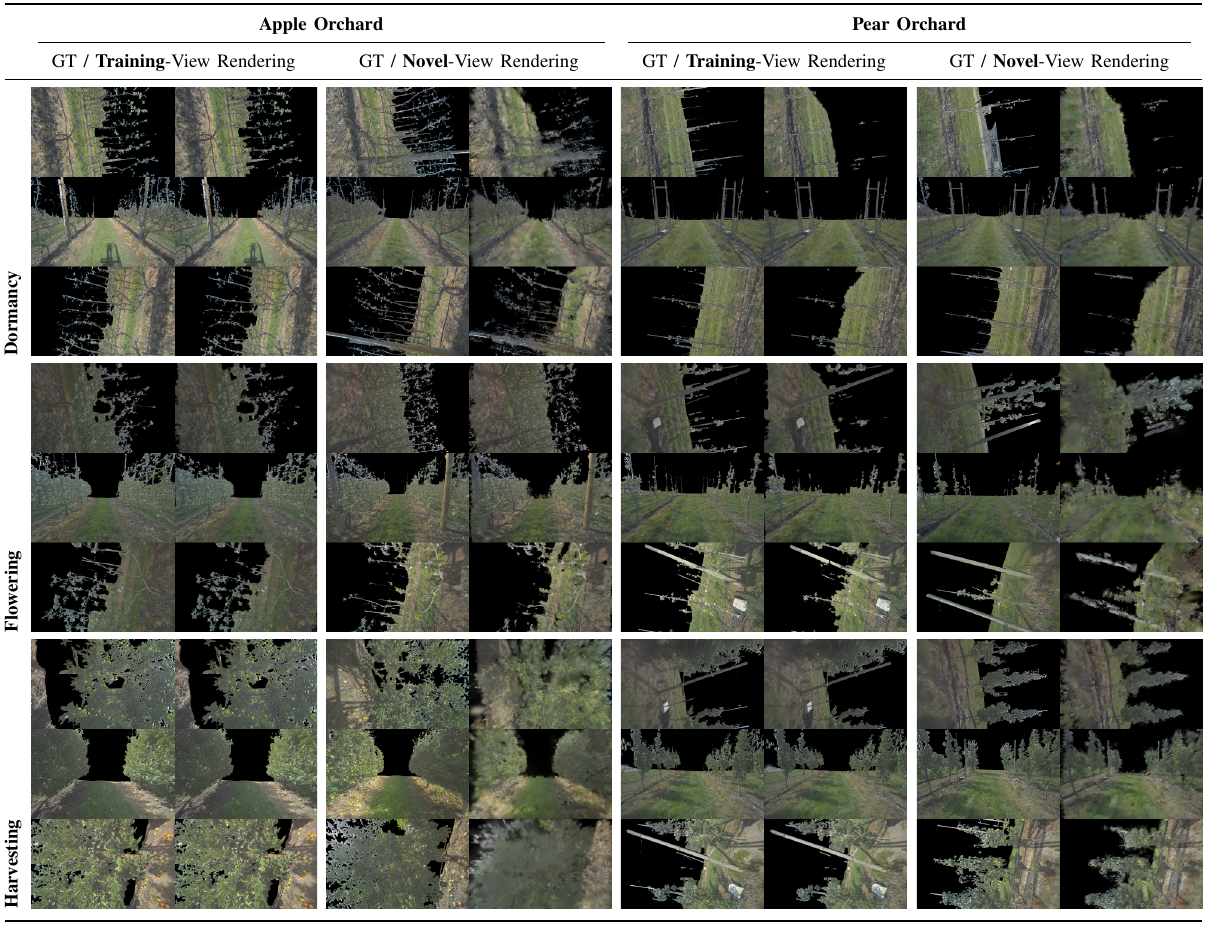

Qualitative Comparison

Training-view renderings across apple and pear orchards in all three phenological stages, for AgriGS-SLAM and all baselines.

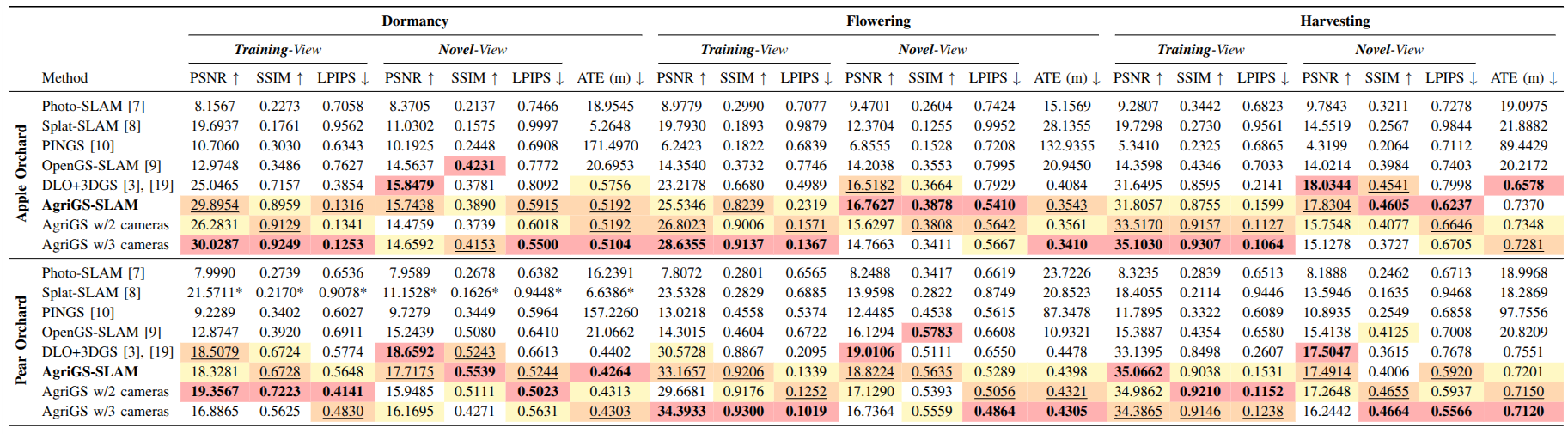

Quantitative Results

Training- and novel-view PSNR / SSIM / LPIPS and Absolute Trajectory Error (ATE, m) across both orchards and all three phenological stages.

The full table, including ablation variants, is available in the arXiv paper and on IEEE Xplore.
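For readers unfamiliar with the reported metrics, two of them are simple enough to sketch in a few lines. The code below assumes the standard definitions: PSNR as 10·log10(MAX² / MSE) over pixel intensities, and ATE as the RMSE of the translational error between estimated and ground-truth positions. It further assumes the two trajectories are already time-associated and expressed in the same frame; the alignment step used in the paper's evaluation is not described here and is omitted. SSIM and LPIPS need windowed statistics and a learned network respectively, so they are left out. The function names are illustrative.

```python
import math


def psnr(rendered, reference, max_value=1.0):
    """Peak signal-to-noise ratio in dB for two flat pixel sequences."""
    if not rendered or len(rendered) != len(reference):
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(rendered)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def ate_rmse(estimated, ground_truth):
    """RMSE of translational error in metres.

    Both inputs are sequences of (x, y, z) positions, assumed to be
    time-associated and already aligned to a common frame.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared = [
        sum((e - g) ** 2 for e, g in zip(p_est, p_gt))
        for p_est, p_gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared) / len(squared))


if __name__ == "__main__":
    print(f"PSNR: {psnr([0.2, 0.4, 0.6], [0.25, 0.4, 0.55]):.2f} dB")
    est = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    gt = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0)]
    print(f"ATE RMSE: {ate_rmse(est, gt):.3f} m")
```

Higher PSNR and SSIM are better, while lower LPIPS and ATE are better. Computing the image metrics separately on training views and on novel views is what makes the gap between the two visible.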

Training vs. Novel-View Rendering

Our standardized protocol evaluates scenes from held-out novel viewpoints, exposing 3DGS overfitting that inflates scores in training-only benchmarks.

Dataset

Multi-sensor recordings in apple and pear orchards across dormancy, flowering, and harvesting. Each sequence includes synchronized RGB-D images (3× ZED X), 32-channel LiDAR point clouds (Ouster OS0), and RTK ground-truth trajectories (Fixposition VIO–GNSS).

BibTeX

@article{usuelli2026agrigs,
  author={Usuelli, Mirko and Rapado-Rincon, David and Kootstra, Gert and Matteucci, Matteo},
  journal={IEEE Robotics and Automation Letters},
  title={AgriGS-SLAM: Orchard Mapping Across Seasons via Multi-View Gaussian Splatting SLAM},
  year={2026},
  volume={11},
  number={6},
  pages={7102-7109},
  doi={10.1109/LRA.2026.3685453}
}

Acknowledgments

The authors thank the Fruit Research Center (FRC) in Randwijk for access to the orchards.

Mirko Usuelli's work was carried out within the Agritech National Research Center and funded by the European Union — Next-GenerationEU (PNRR – M4C2, Inv. 1.4 – D.D. 1032 17/06/2022, CN00000022).

Contributions from Matteo Matteucci, Gert Kootstra, and David Rapado-Rincon were co-funded by the European Union — Digital Europe Programme (AgrifoodTEF, GA Nº 101100622).